中国科学院大学数学科学学院, 北京 100049

摘要: 提出3种基于离散观测数据的随机微分方程参数估计的方法。第1种方法应用于线性随机微分方程。推导出这类方程的真解的相关运算服从的分布,使观测数据的运算也服从此分布,由此来估计漂移系数与扩散系数中的未知参数。第2种方法用于It?型随机微分方程。推导出Euler-Maruyama格式的数值解的相关运算服从的分布,使观测数据的运算服从此分布,由此来估计参数。第3种方法用于Stratonovich型随机微分方程。推导出中点格式的数值解的相关运算服从的分布,使观测数据的运算服从此分布,以此来估计参数。数值实验验证了这3种方法的有效性。数值实验显示,Euler-Maruyama格式参数估计的误差约为O(h0.5)阶,中点格式参数估计的误差约为O(h)阶,其中h是数值方法的时间步长。我们提出的3种估计方法均比文献中已有的EM-MLE方法更精确。

关键词: 随机微分方程参数估计Euler-Maruyama格式中点格式

Consider the stochastic differential equation (SDE)

| $\begin{array}{*{20}{c}}{{\rm{d}}X\left( t \right) = f\left( {X\left( {\omega, t} \right), \theta } \right){\rm{d}}t + \sigma \left( {X\left( {\omega, t} \right), \gamma } \right){\rm{d}}B\left( t \right), }\\{X\left( {{t_0}} \right) = {X_0}, }\end{array}$ | (1) |

The problem of parameter estimation in diffusion processes based on discrete observations has been studied by some authors. Morel et al.[1] first studied estimation in discretely observed diffusions. Breton[2] used approximate maximum likelihood estimation (MLE), and studied asymptotic properties of the descritized versions of MLE for linear parametric stochastic differential equations. Dorogovcev[3] used conditional least squares estimation, and gave sufficient conditions for weak consistency of the estimator. Prakasa[4] showed that Bayes estimators and MLE estimators of SDEs have the same asymptotic properties and asymptotic distributions for suitable class of loss functions of some special types. Combining statistical methods with numerical methods, Pedersen[5] used numerical approximationsbased on iterations of the Gaussian transition densities emanating from Euler-Maruyama scheme and studied approximate maximum likelihood. Papaspiliopoulos and Beskos[6] deduced the distribution of certain operation of the numerical solution of the stochastic differential equations by Euler-Maruyama scheme, and then used the maximum likelihood method to estimate the parameters based on observations, which we call the EM-MLE estimator. Inspired by their work, we propose estimators by deducing the distributions of certain relevant operations of the exact solution, as well as numerical solutions resulted from the Euler-Maruyama and the midpoint schemes of the SDEs. Instead of the maximum likelihood method in Ref.[6], we use directly the deduced distributions together with tree-like observations to estimate the parameters. Errors of our estimators are compared with that of the EM-MLE estimator in the numerical experiments.

The contents are arranged as follows. In section 1, we derive the distribution of an operation of the exact solution of constant-coefficient homogeneous linear SDE, and deseribe the method of parameter estimation based on this distribution. In section 2 we propose two methods of parameter estimation for SDEs of It

1 Method based on the exact solutionConsider the constant-coefficient homogeneous linear SDE

| $\begin{array}{*{20}{c}}{{\rm{d}}X\left( t \right) = aX\left( t \right){\rm{d}}t + bX\left( t \right){\rm{d}}B\left( t \right), }\\{X\left( 0 \right) = {X_0} \ne 0, }\end{array}$ | (2) |

| $X\left( t \right) = {X_0}\exp \left[{\left( {a-\frac{1}{2}{b^2}} \right)t + bB\left( t \right)} \right].$ | (3) |

| $X\left( {{t_i}} \right) = {X_0}\exp \left[{\left( {a-\frac{1}{2}{b^2}} \right){t_i} + bB\left( {{t_i}} \right)} \right], $ | (4) |

| $X\left( {{t_{i + 1}}} \right) = {X_0}\exp \left[{\left( {a-\frac{1}{2}{b^2}} \right){t_{i + 1}} + bB\left( {{t_{i + 1}}} \right)} \right].$ | (5) |

| $\begin{array}{l}X\left( {{t_{i + 1}}} \right) = X\left( {{t_i}} \right)\exp \left[{\left( {a-\frac{1}{2}{b^2}} \right)h + } \right.\\\;\;\;\;\;\;\;\;\;\;\;\;\;\;\;\left. {b\left( {B\left( {{t_{i + 1}}} \right)-B\left( {{t_i}} \right)} \right)} \right], \end{array}$ | (6) |

| $B\left( {{t_{i + 1}}} \right) - B\left( {{t_i}} \right)\tilde{\ }N\left( {0, h} \right).$ |

| $\begin{array}{l}\;\;\;\;\;\;\;\;\ln\left| {X\left( {{t_{i + 1}}} \right)} \right| - \ln\left| {X\left( {{t_i}} \right)} \right|\\ = \left( {a - \frac{1}{2}{b^2}} \right)h + b\left( {B\left( {{t_{i + 1}}} \right) - B\left( {{t_i}} \right)} \right)\\ \sim N\left( {\left( {a - \frac{1}{2}{b^2}} \right)h, {b^2}h} \right).\end{array}$ | (7) |

| $\begin{array}{*{20}{c}}{X\left( {{\omega _1}, {t_0}} \right), X\left( {{\omega _1}, {t_1}} \right), \cdots, X\left( {{\omega _1}, {t_N}} \right), }\\ \vdots \\{X\left( {{\omega _m}, {t_0}} \right), X\left( {{\omega _m}, {t_1}} \right), \cdots, X\left( {{\omega _m}, {t_N}} \right), }\end{array}$ |

On the first subinterval [t0, t1], we calculate

| $\begin{array}{*{20}{c}}{\ln \left| {X\left( {{\omega _1}, {t_1}} \right)} \right| - \ln \left| {X\left( {{\omega _1}, {t_0}} \right)} \right|, }\\{\ln \left| {X\left( {{\omega _2}, {t_1}} \right)} \right| - \ln \left| {X\left( {{\omega _2}, {t_0}} \right)} \right|, }\\ \vdots \\{\ln \left| {X\left( {{\omega _m}, {t_1}} \right)} \right| - \ln \left| {X\left( {{\omega _m}, {t_0}} \right)} \right|.}\end{array}$ | (8) |

| $\begin{array}{*{20}{l}}{\left( {a - \frac{1}{2}{b^2}} \right)h = \frac{1}{m}\sum\limits_{j = 1}^m {\ln \left| {X\left( {{\omega _1},{t_1}} \right)} \right|} - }\\{\;\;\;\;\;\;\;\;\;\;\;\;\;\;\;\;\;\;\;\;\ln \left| {(X\left( {{\omega _j},{t_0}} \right)} \right|,}\\{{b^2}h = \frac{1}{{m - 1}}\sum\limits_{j = 1}^m {(\ln \left| {X\left( {{\omega _i},{t_1}} \right)} \right|} - }\\{\;\;\;\;\;\;\;\;{{\left. {\ln \left| {X\left( {{\omega _j},{t_0}} \right)} \right| - \left( {a - \frac{1}{2}{b^2}} \right)h} \right)}^2}}\end{array}$ |

By performing the same procedure on the subsequent small intervals [t1, t2], …, [tN-1, tN], we get a sequence of estimators

| $\hat a = \frac{1}{N}\sum\limits_{i = 1}^N {{{\hat a}_i}}, \hat b = \frac{1}{N}\sum\limits_{i = 1}^N {{{\hat b}_i}} .$ | (9) |

2.1 Method using the Euler-Maruyama schemeConsider the SDE of It

| ${X_{i + 1}} - {X_i} = hf\left( {{X_i}, \theta } \right) + \left[{B\left( {{t_{i + 1}}} \right)-B\left( {{t_i}} \right)} \right]\sigma \left( {{X_i}, \gamma } \right).$ | (10) |

| ${X_{i + 1}} - {X_i} \sim N\left( {hf\left( {{X_i}, \theta } \right), h{\sigma ^2}\left( {{X_i}, \gamma } \right)} \right).$ | (11) |

| $\frac{1}{m}\sum\limits_{j = 1}^m {X\left( {\omega _j^0, {t_1}} \right)} = hf\left( {{X_0}, \theta } \right) + {X_0}$ |

| $\frac{1}{{m - 1}}\sum\limits_{j = 1}^m {{{\left( {X\left( {\omega _j^0, {t_1}} \right) - hf\left( {{X_0}} \right)} \right)}^2}} = h{\sigma ^2}\left( {{X_0}, \gamma } \right), $ |

Then, we fix an arbitrary X(ωk0, t1)=:X1 (k ∈ {1, …, m}), and observe other m values X(ω11, t2), X(ω21, t2), …, X(ωm1, t2), each resulting from evolving time h from the given value X(ωk0, t1)=X1. Let

| $\frac{1}{m}\sum\limits_{j = 1}^m {X\left( {\omega _j^1, {t_2}} \right)} = hf\left( {{X_1}, \theta } \right) + {X_1}, $ |

| $\frac{1}{{m - 1}}\sum\limits_{j = 1}^m {{{\left( {X\left( {\omega _j^1, {t_2}} \right) - hf\left( {{X_1}} \right)} \right)}^2}} = h{\sigma ^2}\left( {{X_1}, \gamma } \right).$ |

Analogously, we can get successively a sequence of estimates

| $\hat \theta = \frac{1}{N}\sum\limits_{i = 1}^N {{{\hat \theta }_i}}, \hat \gamma = \frac{1}{N}\sum\limits_{i = 1}^N {{{\hat \gamma }_i}} .$ | (12) |

| $\begin{array}{*{20}{c}}{{\rm{d}}X\left( t \right) = f\left( {X\left( {\omega, t} \right), \theta } \right){\rm{d}}t + \sigma \left( {X\left( {\omega, t} \right), \gamma } \right) \circ {\rm{d}}B\left( t \right), }\\{X\left( {{t_0}} \right) = {X_0}, }\end{array}$ | (13) |

| $\begin{array}{l}{X_{i + 1}} - {X_i} = f\left( {\frac{{{X_{i + 1}} + {X_i}}}{2}, \theta } \right)h + \\\;\;\;\;\;\;\;\;\;\;\;\;\;\;\;\;\;\;\sigma \left( {\frac{{{X_{i + 1}} + {X_i}}}{2}, \gamma } \right){\xi _i}\sqrt h .\end{array}$ | (14) |

| $\frac{{{{\tilde X}_{i + 1}} - {X_i} - f\left( {\frac{{{{\tilde X}_{i + 1}} + {X_i}}}{2}, \theta } \right)h}}{{\sigma \left( {\frac{{{{\tilde X}_{i + 1}} + {X_i}}}{2}, \gamma } \right)\sqrt h }} \approx {\xi _i} \sim N\left( {0, 1} \right), $ | (15) |

We use the same observation as described in section 2.1. According to (15), for the first time interval [t0, t1], we can let

| $\begin{array}{*{20}{c}}{\frac{1}{m}\sum\limits_{j = 1}^m {\frac{{X\left( {\omega _j^0, {t_1}} \right) - {X_0} - f\left( {\frac{{X\left( {\omega _j^0, {t_1}} \right) + {X_0}}}{2}, \theta } \right)}}{{\sigma \left( {\frac{{X\left( {\omega _j^0, {t_1}} \right) + {X_0}}}{2}, \gamma } \right)\sqrt h }} = 0, } }\\{\frac{1}{{m - 1}}\sum\limits_{j = 1}^m {{{\left( {\frac{{X\left( {\omega _j^0, {t_1}} \right) - {X_0} - f\left( {\frac{{X\left( {\omega _j^0, {t_1}} \right) + {X_0}}}{2}, \theta } \right)h}}{{\sigma \left( {\frac{{X\left( {\omega _j^0, {t_1}} \right) + {X_0}}}{2}, \gamma } \right)\sqrt h }}} \right)}^2}} }\\{ = 1, }\end{array}$ |

| $\hat \theta = \frac{1}{N}\sum\limits_{i = 1}^N {{{\hat \theta }_i}}, \hat \gamma = \frac{1}{N}\sum\limits_{i = 1}^N {{{\hat \gamma }_i}} .$ | (16) |

| $\begin{array}{*{20}{c}}{{\rm{d}}X\left( t \right) = \left( {f\left( {X\left( {\omega, t} \right), \theta } \right) + \frac{1}{2}\frac{{\partial \sigma }}{{\partial X}} \cdot } \right.}\\{\left. {\sigma \left( {X\left( {\omega, t} \right), \gamma } \right)} \right){\rm{d}}t + \sigma \left( {X\left( {\omega, t} \right), \gamma } \right){\rm{d}}B\left( t \right), }\\{X\left( {{t_0}} \right) = {X_0}, }\end{array}$ | (17) |

3 Numerical tests3.1 Estimation based on the exact solutionFor the linear SDE (2), let t0=0, T=1, X0=1. Suppose a and b are given. a and b will be estimated by using the method given in section 1 to test the ability of our estimator.We set the number of subintervals of time to be N=500 and the number of sample path observations to be m=100, and we perform five tests for five different groups of a and b values. The observation data are simulated by the exact solution (3). Our five group of numerical results

Table 1

| Table 1 Estimates based on the exact solution |

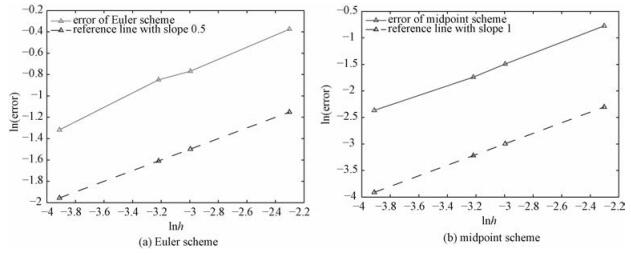

In Fig. 1(a), we plot the points (a, b) denoted by 'o', and the corresponding estimates (

Fig. 1

| Download: JPG larger image |

Fig. 1 Exact values and estimates of the parameter (a, b) | |

3.2 Estimation based on the numerical solutionsTo test the effect of the parameter estimations based on the Euler-Maruyama and the midpoint schemes, we first consider the linear SDE of Stratonovich sense

| $\begin{array}{*{20}{c}}{{\rm{d}}X\left( t \right) = aX\left( t \right){\rm{d}}t + bX\left( t \right) \circ {\rm{d}}B\left( t \right), }\\{X\left( 0 \right) = {X_0} \ne 0, }\end{array}$ | (18) |

| $\begin{array}{*{20}{c}}{{\rm{d}}X\left( t \right) = \left( {a + \frac{1}{2}{b^2}} \right)X\left( t \right){\rm{d}}t + bX\left( t \right){\rm{d}}B\left( t \right), }\\{X\left( 0 \right) = {X_0} \ne 0.}\end{array}$ | (19) |

The data are set as t0=0, T=1, X0=1, N=100, and m=500, and the observation data are generated by the exact solution of (18) and (19)

| $X\left( t \right) = {X_0}\exp \left( {at + bB\left( t \right)} \right).$ | (20) |

Table 2

| Table 2 Estimates based on the numerical solutions |

In Fig. 1(b) we plot the five points (a, b) denoted by 'o', and the corresponding estimates (

3.3 Error analysisIn this section, we analyze the relation between the error of estimate

Set X0=1, t0=0, T=1, a=3, b=1, and the number of observed sample paths m=500. We test four different values of the time step size of the numerical solutions, namely, h=0.02, 0.04, 0.05, and 0.1. In Table 3 listed are the estimated values (

Table 3

| Table 3 Estimates and the corresponding h values |

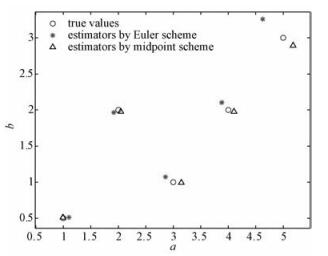

In Table 3, we observe an inereasing tendency of errore with h for both numerical schemes. For a clearer demonstration, we plot lne against lnh for the four different values of h and the corresponding errors e in Fig. 2, with Fig. 2(a) for the Euler-Maruyama scheme and Fig. 2(b) for the midpoint scheme. In Fig. 2, we can observe the order of the error with respect to h. The dashed reference line in Fig. 2(a) is of slope 0.5. The near parallelism between the reference line and the plotted lne-lnh line indicates that the order of the error e for the Euler-Maruyama scheme is about O(h0.5). Analogously, it can be inferred from Fig. 2(b) that the error e for the midpoint scheme is of order O(h), since the dashed reference line in Fig. 2(b) is of slope 1.

Fig. 2

| Download: JPG larger image |

Fig. 2 Error orders with respect to h | |

Meanwhile, it is interesting to note that the Euler-Maruyama method applied to the SDE (19) is of root mean-square convergence order 0.5, while the midpoint scheme applied to the SDE (18) is of root mean-square convergence order 1 ([7, 8]). Three facts imply that the estimate errors based on the numerical solutions may mainly result from the error of the numerical methods themselves. More accurate estimators might be obtained by applying higher-order numerical methods.

3.4 Error comparison with the EM-MLE estimatorFor the linear SDE (2), we apply our estimator based on the exact solution, which we call the exact solution estimator, and the EM-MLE estimator [6] to estimate the parameters (a, b), which are given as a=3, b=1. Moreover, set X0=1, t0=0, and T=1. We perform four tests with four different values of h, namely, h=0.02, 0.04, 0.05, and 0.1, and observe m=100 sample paths for each. Denote the estimate arising from our method by (

Table 4

| Table 4 Exact solution and EM-MLE estimators |

Table 4 shows that our exact solution estimator is more accurate than the EM-MLE estimator.

To compare our numerical solution estimators with the EM-MLE estimator, we consider the linear SDE of Stratonovich sense (18), to which we apply the midpoint scheme estimator, and the equivalent It

In Table 5, (

Table 5

| Table 5 Numerical solution and EM-MLE estimators |

3.5 Numerical tests on non-linear SDEsTo test the effect of our estimators on non-linear SDEs, we consider the following SDE

| $\begin{array}{*{20}{c}}{X\left( t \right) = a{X^2}\left( t \right){\rm{d}}t + b{X^2}\left( t \right) \circ {\rm{d}}B\left( t \right), }\\{X\left( {{t_0}} \right) = {X_0}, }\end{array}$ | (21) |

| $X\left( t \right) = \left( {a{X^2}\left( t \right) + {b^2}{X^3}\left( t \right)} \right){\rm{d}}t + b{X^2}\left( t \right){\rm{d}}B\left( t \right), $ | (22) |

We apply the midpoint estimator to (21), and the Euler-Maruyama estimator to (22). Set X0=0.5, t0=0, T=1, and h=0.02. We use the midpoint scheme with tiny step size h=0.001 to (21) to simulate the true solution of the system which serves as observation data for our tests, and we conduct m=500 samples. The estimation results with different sets of a and b are listed in Table 6, where

Table 6

| Table 6 Estimates with different a and b values |

Again, we plot the five groups of points (a, b), (

Fig. 3

| Download: JPG larger image |

Fig. 3 Exact values and estimates of the parameter (a, b) in the nonlinear SDE (21) or (22) | |

Then, we fixa=1 and b=2, but take h=0.02, 0.04, 0.05, and 0.1, to observe the order of the error

Table 7

| Table 7 Estimates and errors |

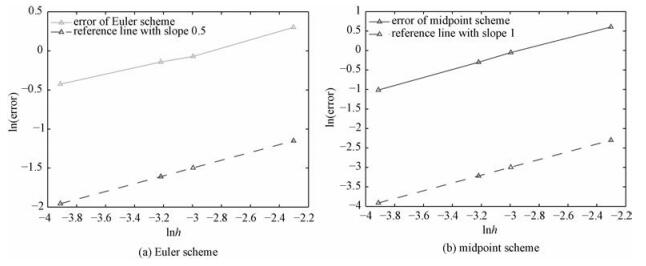

Figure 4(a) and Fig. 4(b) illustrate the ln|error| ~ ln h relationships arising from the Euler-Maruyama and the midpoint estimator, respectively.

Fig. 4

| Download: JPG larger image |

Fig. 4 Error orders with respect to h of non-linear SDE estimators | |

It is indicated that for the non-linear SDE (22) or its equivalent equation (21), the order of the error ee produced by the Euler-Maruyama estimator is about O(h0.5) while that by the midpoint estimator is about O(h). This is the same as the indication for the numerical results for the linear SDE in the previous subsections.

3.6 A remarkIt is observed from the above-performed numerical tests that, with the growth of the order of the numerical method, the estimation accuracy is improved. It is then naturally expected that higher order methods give better estimations. However, in high-order stochastic numerical schemes such as the weak order 2 It

4 Estimating multiple parametersUp to now we have considered estimation of 2 unknown parameters appearing in the drift and diffusion coefficients of a SDE. Actually, our methods can be extended to estimate multiple unknown parameters. Consider the following SDE

| $\begin{array}{*{20}{c}}{{\rm{d}}X\left( t \right) = f\left( {X\left( {\omega, t} \right), {\theta _1}, \cdots, {\theta _p}} \right){\rm{d}}t + }\\{\sigma \left( {X\left( {\omega, t} \right), {\gamma _1}, \cdots, {\gamma _q}} \right){\rm{d}}B\left( t \right), }\\{X\left( {{t_0}} \right) = {X_0}, }\end{array}$ | (23) |

| ${X_{i + 1}} - {X_i} \sim N\left( {hf\left( {{X_i}, \Theta } \right), h{\sigma ^2}\left( {{X_i}, \Gamma } \right)} \right), $ | (24) |

Given m observations at time

| $\begin{array}{*{20}{c}}{\frac{1}{m}\sum\limits_{j = 1}^m {X\left( {\omega _j^0, {t_1}} \right)} = hf\left( {{X_0}, \Theta } \right) + {X_0}, }\\{\frac{1}{{m - 1}}\sum\limits_{j = 1}^m {{{\left( {X\left( {\omega _j^0, {t_1}} \right) - {X_0} - hf\left( {{X_0}, \Theta } \right)} \right)}^2}} }\\{ = h{\sigma ^2}\left( {{X_0}, \Gamma } \right), }\\{\frac{1}{m}\sum\limits_{j = 1}^m {X{{\left( {\omega _j^0, {t_1}} \right)}^3}} = E\left( {X_1^3} \right) = \int\limits_{ - \infty }^{ + \infty } {{x^3}{\rho _1}\left( x \right){\rm{d}}x}, }\\ \vdots \\{\frac{1}{m}\sum\limits_{j = 1}^m {X{{\left( {\omega _j^0, {t_1}} \right)}^{p + q}}} = E\left( {X_1^{p + q}} \right) = \int\limits_{ - \infty }^{ + \infty } {{x^{p + q}}{\rho _1}\left( x \right){\rm{d}}x}, }\end{array}$ | (25) |

| $\begin{array}{l}g\left( t \right) = E{{\rm{e}}^{{\rm{i}}t{X_1}}} = \frac{1}{{\sqrt {2{\rm{\pi }}} {d_1}}}\int\limits_{ - \infty }^{ + \infty } {{{\rm{e}}^{{\rm{i}}tx}}\exp \left( { - \frac{{{{\left( {x - {c_1}} \right)}^2}}}{{2d_1^2}}} \right){\rm{d}}x} \\\;\;\;\;\;\;\; = {{\rm{e}}^{{\rm{i}}{c_1}t - \frac{{d_1^2{t^2}}}{2}}}, \end{array}$ | (26) |

There are (p+q) unknowns and (p+q) equations in the equations system (25), which is usually non-linear and might need iteration methods for solving the unknowns

Given observations at time t2=t0+2h, …, tN=t0+Nh, we write similar equations systems regarding the p+q moments of Xi with probability density function

| ${{\hat \theta }_i} = \frac{1}{N}\sum\limits_{l = 1}^N {\hat \theta _i^l}, {{\hat \gamma }_i} = \frac{1}{N}\sum\limits_{l = 1}^N {\hat \gamma _i^l}, $ | (27) |

Note that the observation data are the same tree-like data as described in section 3.1, wherefore the Xi is known when we calculate the moments of Xi+1, for i=0, …, N-1.

References

| [1] | Morel J M, Takens F, Teissier B. Parameter estimation in stochastic differential equations[M].Berlin Heidelberg: Springer-Verlag, 2008: 6-10. |

| [2] | Breton A L. On continuous and discrete sampling for parameter estimation in diffusion type processes[M].Berlin Heidelberg: Springer, 1976: 124-144. |

| [3] | Dorogovcev A J. The asymptotic properties of least square estimators for regression coefficients[J].Problemy Peredai Informacii, 1973, 4:49–57. |

| [4] | Prakasa R. On Bayes estimation for diffusion fields[M].North Holland: Statistical Publishing Society, 1984: 575-590. |

| [5] | Pedersen A R. A new approach to maximum likelihood estimation for stochatic differential equations based on discrete observations[J].Scandinavian Journal of Statistics, 1995, 22(1):55–71. |

| [6] | Papaspiliopoulos O, Beskos A. An introduction to modelling and likelihood inference with stochastic differential equations. Great Britain: Warwick, 2011. |

| [7] | Kloeden P E, Platen E. Numerical solution of stochastic differential equations[M].Berlin Heidelberg: Springer-Verlag, 1992: 305. |

| [8] | Milstein G N, Tretyakov M V. Stochastic numerics for mathematical physics[M].Berlin Heidelberg: Springer, 2004: 9-10. |